It has always been my impression that the only way to get 10-bit color depth was with a professional card. If NVIDIA prosumer/consumer GPUs will support 10-bit color, the AMDs are heading for eBay.

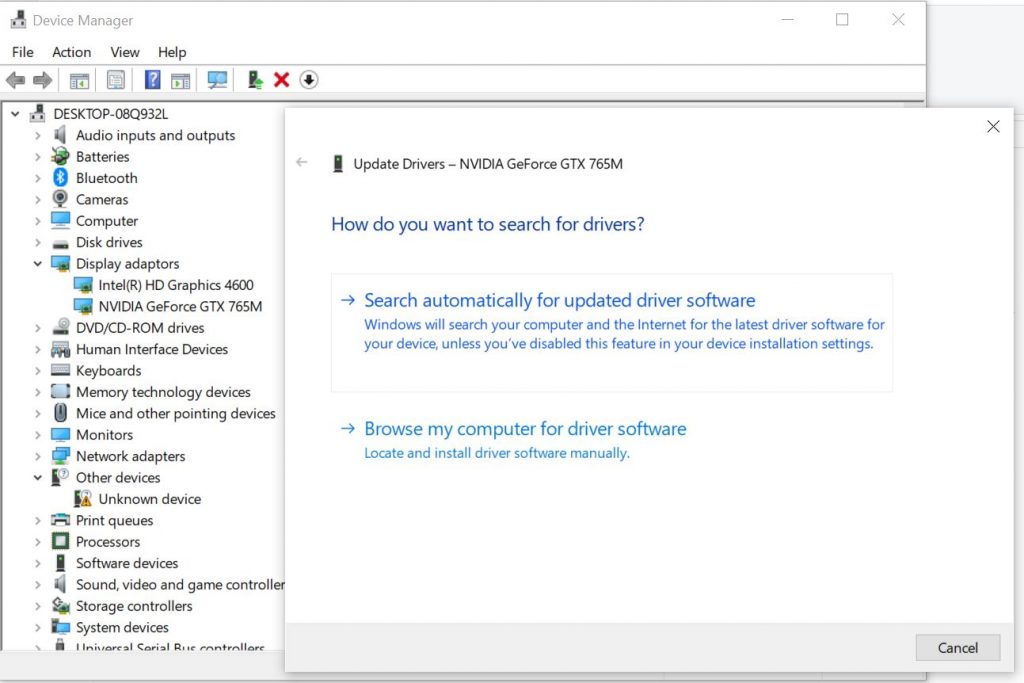

# Spyder5Elite problems with NVIDIA Control Panel Windows 10 driver

A little more information on what I do and why.

I do professional product photography for the web. Pardon the pun, but I shoot unique firearms. I bought the Titan RTX to go in an Intel Core i9 7900X workstation for shooting tethered, meaning a Nikon D810 is connected to the computer via a USB 3 cable.

I use Capture One Pro 12.0 as the RAW processing engine and, normally, it works great and is fast. I cannot set up for the next shot until the current shot is rendered and I see it. The combination I just mentioned renders a 40 MB+ file in about 2.5 seconds from when the shutter is tripped until I see the image (currently using 2X 1080 Ti). I also do some printing, both in-house and with custom labs for specialty work. It is important to see on the screen exactly what prints.

I upgraded two workstations right before the end of the year and am still in the process of getting things worked out. I do have a couple of AMD cards that support 10-bit color but are disappointing from a performance standpoint. One is a WX 8200, which has a certified driver. The others are 2X Vega Frontier Edition GPUs, which do support 10-bit color depth, but the driver is not certified. I couldn't care less about a certified driver as long as it works.